The orbital data center power problem — and how to solve it

Orbital data centers (ODCs) have exploded in popularity. Numerous tech giants including Google, Amazon, and SpaceX, as well as venture-backed startups, are predicting a future where vast numbers of data centers will orbit several hundred miles above our heads and are investing accordingly.

But orbital data centers can’t scale to gigawatt-class AI infrastructure unless the fundamental power bottleneck is solved.

Spacecraft today are dramatically undersized for the power needs of AI-ready compute. The average satellite today generates less than 1 kW of average power, which isn’t even enough to power a single AI-class NVIDIA GPU, let alone the tens of thousands to millions of GPUs needed within each orbital data center.

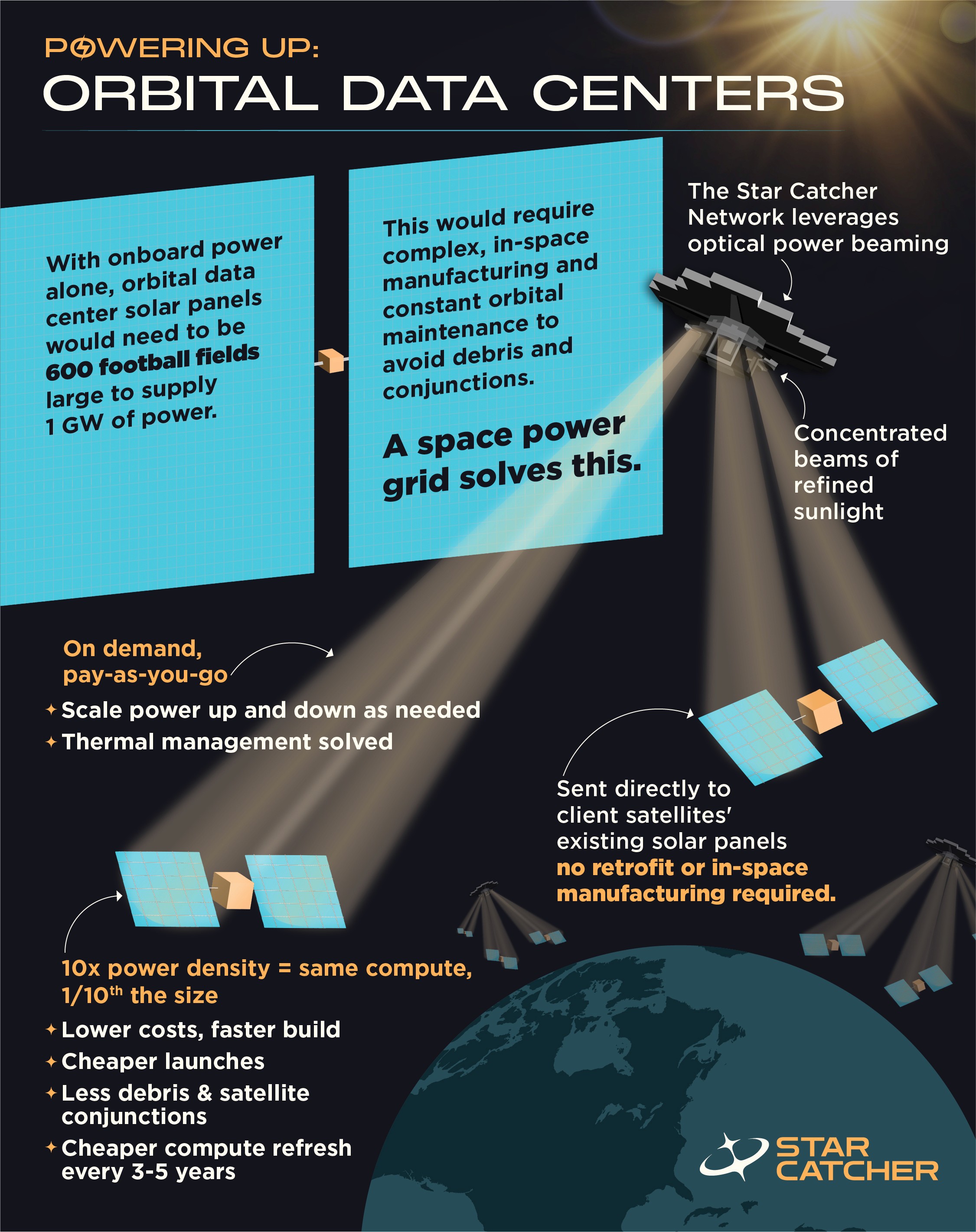

By turning power into shared infrastructure via an optical power beaming orbital energy grid, Star Catcher shifts space data centers from a long-term, capital-intensive bet into a near-term, scalable industry that enables players from small to large to compute and compete.

Star Catcher’s beamed power increases electricity generation by up to 10x without retrofit, enabling:

- Smaller solar arrays with reduced collision risk
- Lower-cost satellites that are faster to deploy
- Flexible, on-demand operations that scale

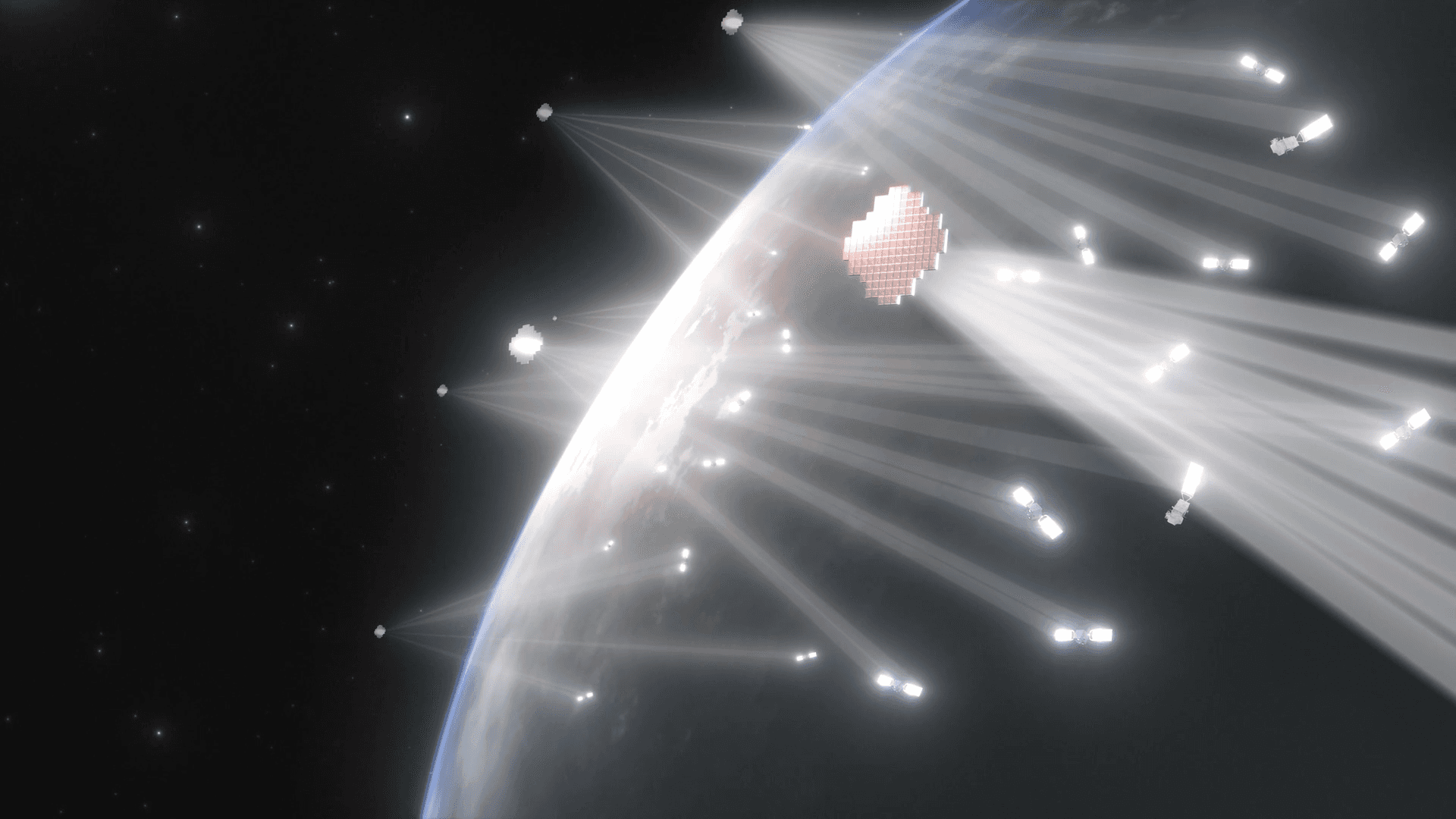

This case study provides a quantitative analysis of how Star Catcher’s orbital energy grid (shown visually in Figure 1 below) enables industrial-scale, economically-viable orbital data centers in the near term. Our conclusions, summarized in Table 1 below and detailed thereafter, are built on a robust techno-economic systems engineering model.

The key finding: with Star Catcher, you get up to 3.5 orbital data centers for the cost of one.

Table 1: Orbital data center revenue multipliers and reduced collision risk by use case.

| ODC use case | Revenue multiplier with Star Catcher | Collision risk reduction with Star Catcher |
|---|---|---|
| Hyperscaler AI | 1.3 – 3.5x | 30 – 85% |
| Edge computing | 1.9 – 3.5x | 60 – 85% |
| Cloud (e.g., Content Distribution Networks) | 2.3 – 3.5x | 70 – 85% |

Figure 1: Star Catcher's optical power beaming orbital energy grid enables the same compute in 1/10th size, with no retrofit required.

Part 1: How power and mass drive the orbital data center equation

The AI boom has driven enormous resources into the development of more AI-ready data centers. However, these data centers are quickly running into regulatory, environmental, and infrastructure constraints. The space environment alleviates these concerns thanks to unlimited deployment area, near-continuous solar flux, and radiative cooling direct to the vacuum of space.

Industry projections now target gigawatt-class data centers in orbit, each requiring roughly one million AI-ready GPUs and solar arrays spanning 600 football fields. Whether this scale is accomplished in a single monolithic bus or distributed across many smaller spacecraft flying in a cluster configuration (numbering in the thousands or millions, as SpaceX and Google’s Project Suncatcher intend), there is a problem threatening to pop this bubble before the first gigawatt comes online: the average satellite bus today generates less than 1 kW of power, roughly the power a single NVIDIA H100 GPU needs.

While many argue that we can build gigawatt-class data centers in space, without limits, in the next few years, the power problem is a fundamental limitation of today’s satellites. If it is not addressed, space data centers will be remembered as a once-ambitious vision cut short by a burst bubble.

As hyperscalers have learned on Earth, power is the fundamental enabler, and primary constraint, that unlocks or stalls new technologies and economies of scale.

At the same time, spacecraft economics remain deeply mass-constrained. Even with future reductions in launch cost, kilograms will still determine capital expenditure, both through the price and accessibility of launch and through the cost of the spacecraft itself. For orbital compute, the key design trade therefore becomes the mass-per-power ratio: how many kilograms of spacecraft must be launched to deliver each kilowatt of usable compute.

The mass-per-power bottleneck

Top-of-the-line AI compute, such as NVIDIA’s H100 GPU, requires about 1 kW of power with its supporting compute components, of which 700 W is for the GPU itself. The average satellite today (excluding Starlink) generates about 1 kW of average power, which is distributed throughout all spacecraft subsystems and payloads. Simply put, there’s very little room in today’s architectures for AI workloads.

Figure 2: CNBC covering the Starcloud-1 deployment.

In 2025, orbital data center startup (and Star Catcher customer) Starcloud launched Starcloud-1 (seen in Figure 2 above), carrying a single NVIDIA H100 GPU on an Astro Digital Corvus Micro bus. This mission was a landmark in space compute, with “100x more powerful GPU or AI compute than has been seen in space before” (Starcloud). However, the Corvus Micro bus only generates ~100 W at peak sun-pointing. After accounting for spacecraft subsystems, our models suggest that Starcloud-1’s GPU likely ran below a 10% duty cycle.
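The sub-10% figure follows from a simple power balance. As a rough sketch (not the model used for the estimate above), the snippet below bounds the duty cycle using two numbers quoted in this article: ~100 W of peak generation and ~1 kW for an H100 with its supporting components. The function name and the 30 W bus overhead are hypothetical, chosen only to show the direction of the effect.

```python
def max_duty_cycle(generated_w: float, load_w: float, bus_overhead_w: float = 0.0) -> float:
    """Upper bound on the fraction of time a load can run, from a simple energy balance.

    Ignores eclipses, battery losses, and off-pointing, all of which lower the result.
    """
    available_w = max(generated_w - bus_overhead_w, 0.0)
    return min(available_w / load_w, 1.0)


# Figures from the text: ~100 W peak generation, ~1 kW for one H100 plus support hardware.
best_case = max_duty_cycle(generated_w=100.0, load_w=1000.0)

# Hypothetical 30 W of bus overhead (an assumption, not a published Corvus Micro figure).
with_overhead = max_duty_cycle(generated_w=100.0, load_w=1000.0, bus_overhead_w=30.0)

print(f"best case: {best_case:.0%}, with assumed overhead: {with_overhead:.0%}")
```

Even with zero overhead the bound is 10%, so any realistic subsystem draw pushes the GPU duty cycle below that.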

Scaling to gigawatt-class ambitions will require each data center to host one million continuously-running H100-equivalent GPUs. With current satellite power budgets, you’d need the equivalent of one million spacecraft. For that to be worthwhile, they have to be competitive with Earth data centers (or provide unique space-based value in edge computing and content distribution networks), and today they’re not even close.

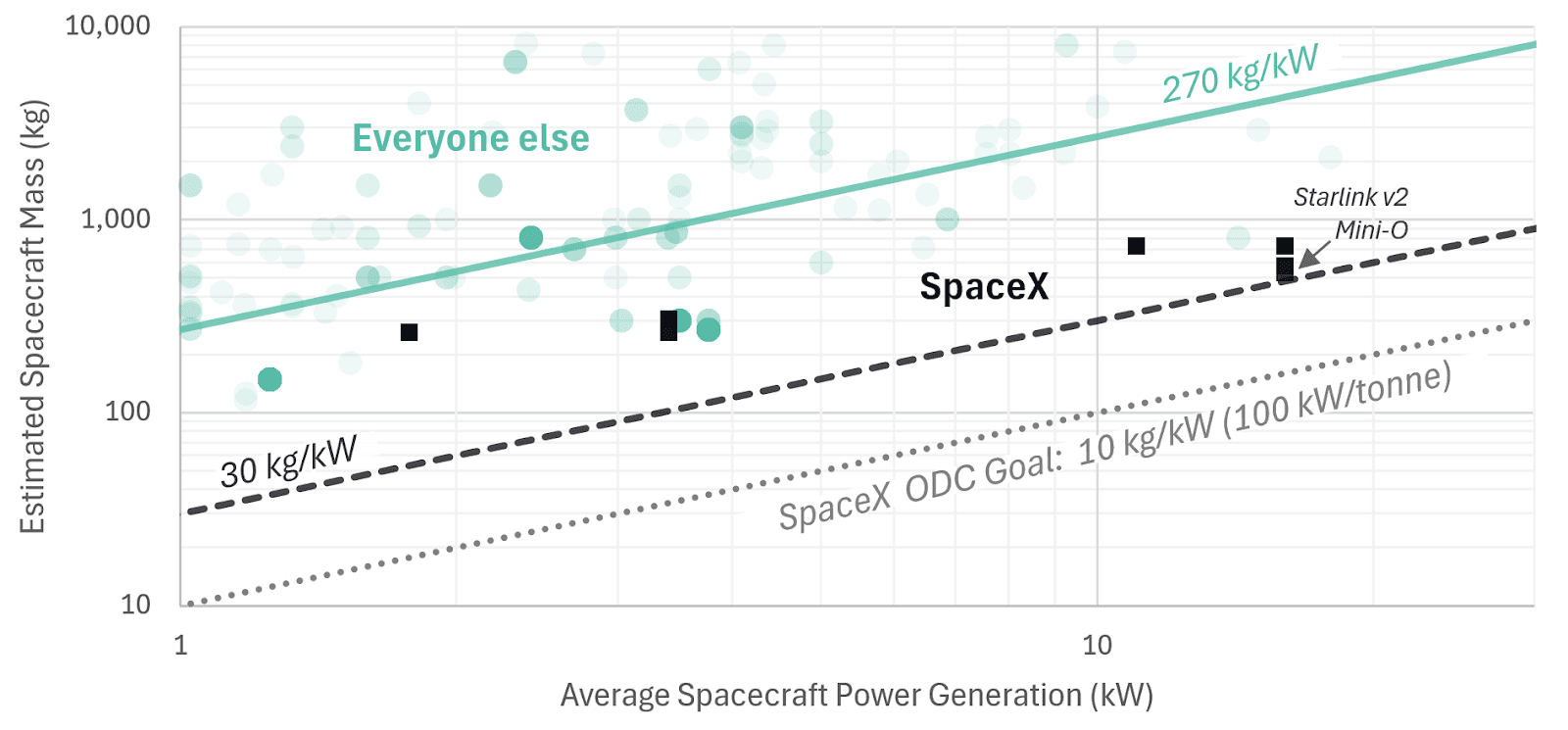

SpaceX is an outlier, with some Starlink satellites dedicating an estimated ~50% of spacecraft mass to power generation vs. 5-15% in conventional satellites.

SpaceX’s Starlink ran into similar terrestrial competition with telecommunications. Their solution was to hyper-optimize around power infrastructure at massive economies of scale, unmatched by any other space company today. While conventional satellite buses only spend ~5-15% of their mass on power generation, roughly 50% of the mass of a 575 kg Starlink v2 Mini Optimized (Mini-O) satellite is dedicated to power generation thanks to a solar array wingspan longer than a basketball court and iterative design optimization with their launch vehicles.

Based on our calculations, this enables them to achieve ~28 kW of peak power and ~15-20 kW of average power, which results in a mass-per-power of ~30-40 kg/kW. Compared to recently launched (2020-2025) LEO spacecraft with >1 kW of power generation, the Starlink v2 Mini-O is roughly 7-9x more power dense (see Figure 3 below), providing a brute-force path to gigawatt-class data centers in the near term.
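The mass-per-power range above is a direct division of spacecraft mass by average power. A minimal sketch using only the figures quoted in the text (575 kg, 15-20 kW average); the function and constant names are illustrative:

```python
def mass_per_power_kg_per_kw(mass_kg: float, avg_power_kw: float) -> float:
    """Kilograms of spacecraft launched per kilowatt of average power generated."""
    return mass_kg / avg_power_kw


STARLINK_V2_MINI_O_MASS_KG = 575.0   # from the text
AVG_POWER_RANGE_KW = (15.0, 20.0)    # estimated average power range from the text

# Lower average power gives the worse (higher) kg/kW figure.
worst = mass_per_power_kg_per_kw(STARLINK_V2_MINI_O_MASS_KG, AVG_POWER_RANGE_KW[0])
best = mass_per_power_kg_per_kw(STARLINK_V2_MINI_O_MASS_KG, AVG_POWER_RANGE_KW[1])

print(f"{best:.1f} to {worst:.1f} kg/kW")
```

The result lands at roughly 29-38 kg/kW, which the text rounds to ~30-40 kg/kW.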

Figure 3: 2020-25 LEO Spacecraft average power vs. mass showing SpaceX’s Starlink spacecraft outperforms the market, setting up a competitive orbital data center debut.

For tech giants like Google, Amazon, and others, replicating that vertical integration to maintain their cross-domain cloud dominance means years of catch-up. For venture-backed startups, it’s difficult to compete without comparable capital and manufacturing scale.

But there’s another approach: shared power infrastructure.

Part 2: How Star Catcher’s grid energizes ODCs

A viable path to sustainable, industrial-scale orbital data centers

ODC payloads are not volume or mass constrained. 300-500 kilogram commoditized satellite buses provide sufficient payload mass and volume to support entire data center racks, though the power provided is <10% of what is needed to operate them. Similarly, smaller spacecraft like Astro Digital’s Corvus Micro platform can accommodate multiple server blades but lack the power plant to operate them at economically viable uptimes.

Extending the same power grid model that powered revolutions in 5G and AI on Earth to space fundamentally changes the economics for everyone who doesn’t have Starlink-scale vertical integration.

Star Catcher’s orbital energy grid wirelessly beams power to existing spacecraft solar panels, allowing them to generate up to 10x the power thanks to recent advances in high-power lasers, precision tracking systems, and lightweight optics.

A 100 W bus becomes 1 kW-capable, meaning even spacecraft buses flying today, such as Astro Digital’s Corvus Micro platform, could power an NVIDIA GPU at 100% duty cycle. Typical 1,000 to 1,500 W buses leap to systems of up to 15 kW, enough to power a quarter of a data center rack at economically viable uptimes of up to 100%.

Recognizing this, Starcloud and Astro Digital, along with other satellite platform providers, orbital data infrastructure companies, and companies in other space verticals, have already signed Power Purchase Agreements with Star Catcher.

By receiving scalable, on-demand power at up to 10x solar flux, orbital data centers can dramatically increase revenue generation; decrease satellite build costs, constellation size, and deployment timelines; reduce collision risk; operate at 100% duty cycles; and optimize their thermal management.

We are at an inflection point where investment interest and engineering can align to create this entirely new market that will change the way we live on Earth. To help realize this, we developed a techno-economic systems engineering model to quantify the benefit of Star Catcher’s orbital energy grid powering industrial-scale, economically-viable orbital data centers. The following sections explore advantages regarding solar panel sizing, orbital regimes, mass-per-power optimization, and even thermal management.

Sizing & orbits & flux, oh my!

Current projections for gigawatt-class data centers (not powered by Star Catcher’s orbital energy grid) would require >600 football fields worth of solar panels, before taking into account power storage needs. Whether deployed as a single monolithic data center or a swarm of smaller ones, this power infrastructure investment is a complex and supply chain-halting capital expense.

The good news is, solar array size is determined by the local solar flux (physics, not engineering). Missions such as BepiColombo in the inner solar system have already demonstrated that standard Earth solar panels can operate at several times Earth’s local solar intensity, producing more power per square meter with much smaller arrays. Star Catcher’s optical power beaming provides the same advantage, increasing that flux here in Earth orbit by an order of magnitude, allowing operators to multiplicatively decrease their solar panel size needed to achieve the desired power level. Star Catcher effectively brings the Sun closer to you.

To illustrate, we modeled a feasible near-term 20 MW-class orbital data center (the average capacity of a data center on Earth today across applications). Figure 4 below interactively explores the total aggregate footprint reduction for a 20 MW data center, in terms of football fields, with Star Catcher-powered architectures ranging from 2-10x additional solar flux (Star Catcher power is on-demand and scalable up or down).

By enabling up to 10x power generation from existing solar panels, Star Catcher enables an order-of-magnitude reduction in array sizing, resulting in a lighter, simpler system with massive savings that propagate throughout the structure, propulsion, and overall bus design.

Taking it a step further, reduced solar array area shrinks collision risk by up to 85% near-term, enabling safer orbital data center deployment in ideal orbits.

As Table 2 explores, lower orbits (500-1,000 km) offer reduced latency and are better suited for near-term value drivers in orbital data centers such as near-real-time applications (including emerging, complementary capabilities like edge computing with direct-to-cell (D2C) connectivity where spacecraft must close the link budget to handheld devices on the ground). But these lower orbits are also the most congested, increasingly crowded with megaconstellations and debris. On the other hand, higher orbits (1,000-2,000 km) provide a greater orbital volume and more continuous solar access with fewer and shorter eclipses, but are more difficult to access and suffer from higher latency and increased radiation exposure.

Table 2: Trading lower vs higher LEO altitudes for data center placement between 500-2,000 km.

| | Lower Orbits (500-1,000 km) | Higher Orbits (1,000-2,000 km) |
|---|---|---|
| Latency & Signal | Low latency & reduced uplink power requirements, supporting near-real-time operations and comms | Slowed by up to 4x greater latency & requires 16x more powerful uplink transmitter (difficult D2C adoption) |
| Orbital Congestion | Extremely crowded (megaconstellations and debris) with extremely high collision-risk for large area assets. Avoidance maneuvers require significant increase in propulsion mass, thrust, and power | Much less crowded with much greater separation volume. Avoidance maneuvers are less frequent requiring less increase in propulsion mass, thrust, and power |
| Launch Access | Easy to access, lots of launches, low fuel required | Harder to access, infrequent launches, more fuel required |
| Radiation Environment | More benign for electronics lifetime and computation reliability | Harsher radiation environment hurts compute lifetime and reliability |
| Solar Access | Less continuous sunlight, max eclipse ~38% of an orbit, fewer orbit options to avoid/shorten eclipses | More continuous sunlight, max eclipse ~28% of an orbit, more orbit options to shorten/avoid eclipses |
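The "4x latency" and "16x uplink power" entries in Table 2 are consistent with simple range scaling between the 500 km and 2,000 km ends of the band. The sketch below assumes a satellite directly overhead, propagation delay only (no processing or routing), and free-space inverse-square spreading with everything else in the link budget held fixed. Those simplifications are assumptions made here for illustration.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_ms(range_km: float) -> float:
    """Propagation delay over a straight-line path, in milliseconds."""
    return range_km / SPEED_OF_LIGHT_KM_S * 1000.0


def relative_tx_power(range_km: float, reference_km: float) -> float:
    """Transmit power needed relative to a reference range, assuming inverse-square loss."""
    return (range_km / reference_km) ** 2


low_km, high_km = 500.0, 2000.0  # altitude band edges from Table 2

latency_ratio = one_way_delay_ms(high_km) / one_way_delay_ms(low_km)
power_ratio = relative_tx_power(high_km, low_km)

print(f"one-way delay: {one_way_delay_ms(low_km):.2f} ms vs {one_way_delay_ms(high_km):.2f} ms")
print(f"latency ratio: {latency_ratio:.0f}x, uplink power ratio: {power_ratio:.0f}x")
```

Slant paths to satellites near the horizon are longer than the altitude, so real ratios depend on the geometry of each pass.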

Star Catcher power beaming enables the best of both worlds: ODC satellites can operate in ideal lower orbits while leveraging power beaming to reduce solar array size and kg/kW.

Star Catcher allows orbital data centers to focus on competing and computing where it matters, with a fraction of the cost and risk.

To illustrate this, we examine the 20 MW ODC introduced above, chosen for its similarity to state-of-the-art terrestrial data centers. We focus on a cluster of spacecraft rather than a single spacecraft, since a single 20 MW spacecraft is currently too massive to fit within the launch capacity of even Starship-class vehicles. Via clustered architectures like that of Google’s Project Suncatcher (Figure 5, Exploring a space-based, scalable AI infrastructure system design), 20 MW can be achieved in an 81-satellite configuration with roughly 250 kW per spacecraft.

Figure 5. Source: Google Research's Project Suncatcher - An ODC concept that avoids in-space assembly with a tight cluster of smaller spacecraft leveraging high-bandwidth inter-satellite laser links, in an 81-satellite configuration. The cluster radius is R=1 km, with the distance between next-nearest-neighbor satellites oscillating between ~100-200m, at a 650km orbital altitude.

Via traditional approaches, 250 kW of average power generation would require each spacecraft to have 1,100 m² solar arrays (more than a quarter acre), larger than any that have ever been deployed commercially. Given 10x solar flux from Star Catcher, each 250 kW node would require only 110 m² solar array area. Notably, this is roughly achieved today with Starlink solar arrays (105 m²), while commercially available satellite buses such as K2 Space’s Mega bus offer this solar array class to the market.
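The array sizing above can be reproduced with a one-line relationship: required area equals power divided by areal power density, and beamed power multiplies that density. The sketch below derives the implied average density (~227 W/m²) from the 250 kW and 1,100 m² figures in the text. It assumes the panels convert the extra flux at the same efficiency, which is the premise of the "up to 10x" claim rather than something verified here.

```python
def required_array_area_m2(avg_power_kw: float, avg_w_per_m2: float,
                           flux_multiplier: float = 1.0) -> float:
    """Solar array area needed to deliver avg_power_kw at a given flux multiplier."""
    return avg_power_kw * 1000.0 / (avg_w_per_m2 * flux_multiplier)


NODE_POWER_KW = 250.0      # per-spacecraft average power, from the text
BASELINE_AREA_M2 = 1100.0  # unaugmented array area, from the text
NODES = 81                 # cluster size, from the text

# Average areal power density implied by the two figures above (about 227 W/m^2).
implied_w_per_m2 = NODE_POWER_KW * 1000.0 / BASELINE_AREA_M2

for k in (1, 2, 3, 10):
    area = required_array_area_m2(NODE_POWER_KW, implied_w_per_m2, k)
    print(f"{k:>2}x flux: {area:7.1f} m^2 per node")

cluster_power_mw = NODES * NODE_POWER_KW / 1000.0
print(f"cluster power: {cluster_power_mw:.2f} MW")
```

At 10x the area falls to 110 m² per node, and 81 nodes at 250 kW come to 20.25 MW, matching the 20 MW-class target.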

This combination of Star Catcher power and commercially emerging satellite buses provides a near term actionable approach to fielding a 20 MW ODC architecture with superior kW/tonne performance.

With 10x power from Star Catcher, a K2 Space Mega bus could go from 30 kW peak power to 300 kW average power. If the Mega bus, together with the right compute payload and thermal approach, has a total mass below 3,000 kg, this spacecraft would break the 10 kg/kW (100 kW/tonne) goal set by SpaceX before Starship even comes online.
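Because this article quotes both kg/kW and kW/tonne, a small conversion helper makes the 3,000 kg threshold easy to check. The sketch uses the 3,000 kg and 300 kW figures from the paragraph above; the function names are illustrative.

```python
def kg_per_kw(mass_kg: float, power_kw: float) -> float:
    """Mass-per-power: lower is better."""
    return mass_kg / power_kw


def kw_per_tonne(kg_per_kw_value: float) -> float:
    """Power-per-mass: the reciprocal metric, with 1 tonne = 1,000 kg."""
    return 1000.0 / kg_per_kw_value


# Threshold case from the text: a 3,000 kg spacecraft delivering 300 kW average.
threshold = kg_per_kw(3000.0, 300.0)

print(f"{threshold:.1f} kg/kW = {kw_per_tonne(threshold):.0f} kW/tonne")
```

Any total mass under 3,000 kg at 300 kW therefore beats the 10 kg/kW (100 kW/tonne) goal.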

“Wait, you guys want this to make money?” The value of mass-per-power optimization

Taking it one step further, those footprint savings translate into direct and secondary mass and cost savings that multiply the return on investment and accelerate time-to-revenue with the supply chain of today.

As we’ve seen, the key design trade is the ODC’s mass-per-power ratio: how many kilograms of spacecraft must be launched to deliver each kilowatt of usable compute.

If the goal is to provide unique and cost-competitive value, orbital data center platforms must evolve toward aggressive spacecraft mass-per-power optimization.

The following sections analyze the mass-per-power of state-of-the-art compute against spacecraft power generation systems today, and how “plugging” into Star Catcher’s orbital energy grid multiplicatively improves the bottom line and revenue potential.

Massive compute = massive power = massive… mass

As compute is the key value proposition of orbital data centers, it is imperative to optimize around its needs and relative mass-per-power. Table 3 compares representative compute mass-per-power benchmarks across state-of-the-art terrestrial infrastructure, current orbital demonstrations, and futuristic goals. Lower mass-per-power values indicate more economically-viable benchmarks for mass-constrained space applications.

Table 3: Compute mass-per-power benchmarks on Earth and in space. Lower numbers are better.

| Domain | Platform | Compute Benchmark (kg/kW) | Status | Notes |
|---|---|---|---|---|
| Earth | AI Server Racks | 20.0 | Operational | Generic 1,200 kg rack at 60 kW for AI workloads |
| Earth | NVIDIA DGX H100 | 11.5 | Operational | Top-of-the-line, 130.5 kg at 11.3 kW max for 8 GPUs |
| Space | Starcloud-1 | 30.0 | Operational | Prototype, 30 kg payload with ~1 kW of power |
| Space | SpaceX Goal* | 2.6* | Claim | 10 kg/kW (100 kW/tonne) goal with 20% mass dedicated to compute at a 1.3 PUE |
| Space | Near-term standard | 9.2 | Predicted | 20% mass optimization of the NVIDIA DGX H100 system |

*SpaceX achieving their 100 kW/tonne goal requires multi-generation improvements in today’s top-of-the-line compute architectures, suggesting a decade of terrestrial development is first required.

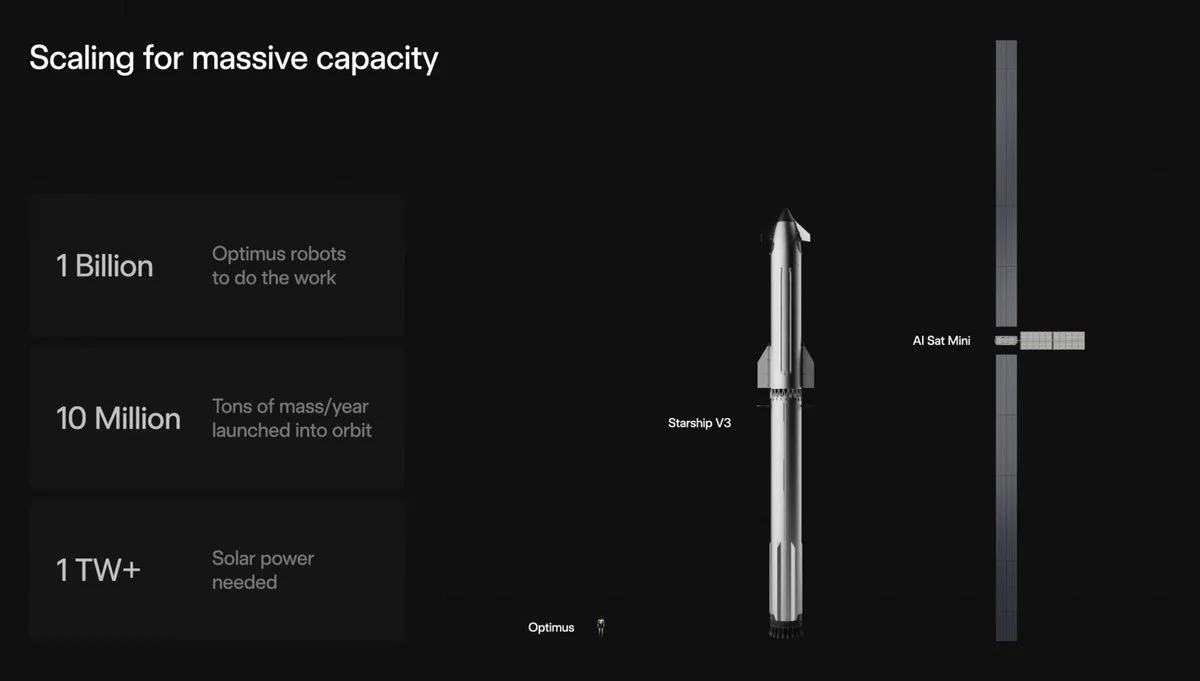

Elon Musk has claimed that SpaceX will achieve 100 kW/tonne all-in for orbital data centers (10 kg/kW for the entire spacecraft in our modeling convention). As the relative benchmarks suggest, today this barely covers the mass-per-power of the compute, let alone an entire spacecraft with massive record-breaking solar arrays.

It is worth noting that data centers on Earth are typically rated by their total power consumption, rather than the draw of their IT equipment alone, with a metric called Power Usage Effectiveness (PUE). PUE is the ratio of total facility (or satellite) power to IT (compute) equipment power. Average ranges for hyperscaler, edge computing, and cloud data centers vary from PUE ~1.1 to 2. Our modeling in the sections below uses an average PUE of 1.3 to focus on the competitive end of mass-per-power performance.

To convert the compute benchmarks in Table 3 into facility-level benchmarks (or in this case satellite-level), we divide the near-term standard 9.2 kg/kW by the 1.3 PUE to derive ~7 kg/kW for compute mass relative to total spacecraft power. With this benchmark for our near-term compute payload, we can now model the surrounding spacecraft.
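The Table 3 benchmarks and the PUE conversion can be recomputed from the masses and powers given in the table notes. This is a sketch with illustrative variable names. The SpaceX line reflects one reading of the table note (20% of a 10 kg/kW spacecraft is compute, re-expressed per kW of compute power via the 1.3 PUE), which is an interpretation that happens to reproduce the 2.6 figure.

```python
PUE = 1.3  # total power / compute power, as assumed in the text

# Compute mass per kW of compute power, from the Table 3 notes.
ai_rack = 1200.0 / 60.0    # generic AI rack
dgx_h100 = 130.5 / 11.3    # NVIDIA DGX H100
near_term = 0.8 * dgx_h100  # 20% mass optimization of the DGX H100

# Re-express compute mass per kW of *total* spacecraft power.
near_term_per_total_kw = near_term / PUE

# Interpretation of the SpaceX row: 10 kg/kW all-in, 20% of mass is compute.
spacex_compute = 10.0 * 0.20 * PUE

print(f"AI rack:            {ai_rack:.1f} kg/kW")
print(f"DGX H100:           {dgx_h100:.1f} kg/kW")
print(f"Near-term standard: {near_term:.1f} kg/kW")
print(f"Per total kW:       {near_term_per_total_kw:.1f} kg/kW")
print(f"SpaceX goal:        {spacex_compute:.1f} kg/kW")
```

Dividing by PUE lowers the figure because the same compute mass is spread over the larger total power budget, giving the ~7 kg/kW used in the spacecraft model.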

Spacecraft mass-per-power breakdown

In this analysis, we baseline a 250 kW orbital data center node satellite, 81 of which make up a 20 MW orbital data center. This section explains the mass assumptions we derived.

State-of-the-art deployable structured solar arrays achieve roughly 10 kg/kW, comparable to systems like Starlink v2 Mini-O. While values lower than 10 kg/kW can be achieved for solar arrays at the cell component level (and some statistics may even include some structure), once we consider the integration mass, structural, and stability characteristics required at the scale of arrays that orbital data centers need (especially with frequent conjunction avoidance maneuvers), we found the 10 kg/kW assumption to be the most accurate for near-term orbital data center architectures. Eclipse recharge margins increase required array sizing by ~38%, bringing the total solar array to ~13.8 kg/kW. Batteries sized for worst-case eclipses in LEO add another ~10 kg/kW. Altogether, the electrical power system contributes roughly 23.8 kg/kW.

Assuming the thermal mass is well-optimized at the associated 250 kW scale, we leverage several model trades to define an active thermal system around 2.5 kg/kW to raise compute-radiator temperature from ~100°C to ~150°C within coefficients of performance (COP) compatible with the 1.3 PUE defined above.

We estimate for this scale that remaining bus subsystems are approximately 7.8 kg/kW. This results in the compute (7 kg/kW) and power systems comprising ~75% (30.8 kg/kW) of the spacecraft, with an overall spacecraft mass-per-power of approximately 41.1 kg/kW.

The result is a roughly 10 tonne (10,000 kg), 250 kW average orbital data center node with mass-per-power optimized competitively.

Even with a well-optimized bus, the power system outweighs the compute 3.4-to-1. Delivering compute at scale requires launching large amounts of power infrastructure mass.
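The mass budget described in this section can be tallied in a few lines. The sketch below uses only the per-kW figures stated above; the dictionary keys and variable names are illustrative.

```python
NODE_POWER_KW = 250.0  # average power per ODC node, from the text

# Mass-per-power contributions in kg/kW, as stated in this section.
budget_kg_per_kw = {
    "solar_array": 10.0 * 1.38,  # 10 kg/kW array plus ~38% eclipse recharge margin
    "batteries": 10.0,
    "compute": 7.0,
    "thermal": 2.5,
    "other_bus": 7.8,
}

power_system = budget_kg_per_kw["solar_array"] + budget_kg_per_kw["batteries"]
total = sum(budget_kg_per_kw.values())

node_mass_kg = total * NODE_POWER_KW
compute_plus_power_share = (power_system + budget_kg_per_kw["compute"]) / total
power_to_compute_ratio = power_system / budget_kg_per_kw["compute"]

print(f"power system: {power_system:.1f} kg/kW, total: {total:.1f} kg/kW")
print(f"node mass: {node_mass_kg:,.0f} kg")
print(f"compute + power share: {compute_plus_power_share:.0%}")
print(f"power-to-compute mass ratio: {power_to_compute_ratio:.1f} to 1")
```

The totals reproduce the 23.8 kg/kW power system, the 41.1 kg/kW spacecraft, and a node of roughly 10 tonnes.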

‘Plug’ into the grid: reduce mass, multiply revenue

Star Catcher changes this trade. By offloading peak power to the orbital energy grid, ODCs can dramatically reduce their power system mass.

Figure 6 below interactively quantifies this for our baseline 250 kW ODC. Note that with peak power offloaded, the spacecraft bus can be optimized to support the compute payload, resulting in secondary mass reductions propagating throughout structure, propulsion, and bus design. 2x solar flux reduces the ODC mass by over 50%, with further savings at 3x solar flux.

Scaling the system for maximum compatibility with Star Catcher’s orbital grid at 10x solar flux reduces the overall spacecraft mass by almost two-thirds, to only 37% of the original mass.

Because spacecraft mass strongly correlates with both manufacturing and launch cost, any mass reduction translates directly into overall cost reduction. Given a linearly modeled mass-vs-capital relationship, and incorporating an aggressive but reasonable 20% learning rate (slightly better than the 15% aerospace average), we derive the resulting revenue multipliers in the range plot, Figure 7, shown below.

If operators more aggressively optimize around always-on grid augmented power at 10x solar flux, they can get up to 3.5 data centers for the price of one.

Most of these benefits can be seen with only 2-3x average solar flux from Star Catcher’s orbital power grid on-demand, using existing solar panels, to meet higher than average compute demand, just like users can always pull more power from the terrestrial grid.

Finally, these revenue multipliers further compound when one incorporates the capital and competitive advantage of decoupling the tech refresh of short-life compute clusters (3-5 years or shorter competitive lifetimes) vs the relatively long-life power infrastructure (decade or more scale).

Initial analysis of these trends suggests that first adopters of an orbital energy grid who combine these factors over a few generations gain a capital efficiency and revenue moat akin to what SpaceX has achieved in launch and LEO telecommunications.

SpaceX Validation

In a twist of fate, just before we published this analysis, SpaceX validated our analysis when they released high-level specifications on their upcoming 'AI Sat Mini' (seen in Figure 8 below), which Elon Musk promised would supply over 100 kW of power to AI-processors (seemingly not including the supporting system and bus power).

Figure 8. SpaceX unveiling an unfurled AI Sat Mini compared to Starship V3 as the basis of near-term orbital data center compute.

Scaling the released AI Sat Mini body against the 124.4-meter-tall Starship, with engineering assumptions based on the SpaceX component supply chain, gives us a 170-meter length made up of two solar arrays totaling ~890 m², with an electrical power output of ~250 kW using their preferred silicon cells. The AI Sat Mini design assumes 100% sun-pointing with silicon, while our case averages between silicon and gallium solar cell efficiencies and across eclipse scenarios, so our assumed baseline solar array area is slightly higher, as seen in Figure 9 below. The claim of over 100 kW for AI processors suggests a first-generation satellite PUE (Power Usage Effectiveness) between 1.4 and 2.0, depending on where the accounting occurs, in line with or worse than our 1.3 PUE assumption. The AI Sat Mini radiator sized for this 250 kW total has a one-sided area of ~91 m² (182 m² two-sided), requiring radiator temperatures around 130 °C. This is in line with our rough radiator sizing and breakeven trades, which run GPU-style compute hot, up to ~100 °C, before using heat pumps to shrink the required radiator mass and minimize cost.

The close alignment between this newly released SpaceX design and our independently derived case study reinforces a larger point: the leading players in orbital compute are being pushed toward similar architectures by the same physical constraints. That makes power infrastructure the real differentiator.

Star Catcher reshapes the economics of orbital data centers

Decoupling computation from power enables orbital data centers to move from bespoke, supply-chain constrained systems into scalable infrastructure, allowing operators to fly lower and compete earlier, and deploy multiple data centers at the cost of one, all while focusing on their core product: compute.

Figure 10. A rendering of Star Catcher's orbital power grid beaming concentrated light with 2-10x power to orbital data center solar arrays with a fraction of the original area.

Star Catcher's in-space power grid doesn't just optimize orbital data center architecture, it evolves it, opening a path to multiplicative gains in compute density and economic performance.

Bonus chapter: Dr. Thermal

OR: How I learned to stop worrying and love T^4

If you’re still reading this hoping to find more on everyone’s favorite comment section hot topic, this is the thermal section for you. A common objection to orbital data centers is the thermal “issue”. Without air or water, how do you dump waste heat? On Earth, data centers rely heavily on conduction locally then convection using fluids (air and water) to carry heat away to the outside environment. In space, the vacuum eliminates convection entirely, leaving radiation as the primary mechanism for rejecting heat. At first glance, radiative cooling is often assumed to be “too slow.” But in orbit, the physics is favorable.

Space provides an effectively infinite heat sink, and radiative heat rejection scales strongly with temperature.

The Stefan–Boltzmann law (see the equation in Figure 11 below) shows that emitted thermal power increases with the fourth power of absolute temperature, meaning modest increases in operating temperature can dramatically increase heat rejection. Even today, satellites like Starlink dissipate 10+ kW of waste heat through passive thermal design, without the need for large dedicated radiator wings.

Figure 11: The Stefan-Boltzmann law governs how heat is radiated as a powerful function of temperature.

Table 4 shows that even at standard GPU operating temperatures, passive radiative cooling is surprisingly effective: typical idle-to-high-load regimes (40-100°C) support roughly 940-1,900 W/m² of heat rejection with no active thermal machinery. For comparison, solar arrays only generate 300-400 W/m² while sun-pointing (~200-270 W/m² average) making radiative cooling generally more area-efficient than power generation.

Table 4: Computation-driven temperature and the resulting heat rejection that show the Stefan-Boltzmann law enables highly efficient heat rejection that scales significantly.

| Thermal Method | Mode of Heat Source | Bus-Radiator Temperature | Two-Sided Bus-Radiator Heat Rejection | Ratio of Solar-Array to Bus-Radiator Areas |
|---|---|---|---|---|
| Passive to bus | GPU Idling | 40 °C | 940 W/m² | 4:1 |
| Passive to bus | GPU High-load | 85 °C | 1,600 W/m² | 7:1 |
| Passive to bus | GPU Max | 100 °C | 1,900 W/m² | 8:1 |
| Active to radiator | Radiator w/ heat pumps, good COP | 150 °C | 3,100 W/m² | 13:1 |
| Active to radiator | Radiator w/ heat pumps, mid COP, long term potential | 200 °C | 4,900 W/m² | 20:1 |
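The heat rejection column in Table 4 is consistent with the Stefan-Boltzmann law applied to both faces of a flat radiator. The sketch below is not the model behind the table: it assumes an emissivity of 0.86 (a value chosen here because it lands close to the tabulated figures; the article does not state one) and ignores absorbed sunlight, Earth infrared, and albedo, treating deep space as a 0 K sink.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiated_w_per_m2(temp_c: float, emissivity: float = 0.86, sides: int = 2) -> float:
    """Heat radiated per square meter of panel: sides * emissivity * sigma * T^4.

    emissivity=0.86 is an assumed value, not one given in the article.
    """
    temp_k = temp_c + 273.15
    return sides * emissivity * SIGMA * temp_k ** 4


# Temperatures and rounded rejection figures from Table 4, for comparison.
table_4 = {40: 940, 85: 1600, 100: 1900, 150: 3100, 200: 4900}

for temp_c, tabulated in table_4.items():
    computed = radiated_w_per_m2(temp_c)
    print(f"{temp_c:>3} °C: computed {computed:6.0f} W/m^2, table {tabulated} W/m^2")
```

Raising the radiator from 100 °C to 150 °C increases rejection by roughly 65%, which is the T^4 leverage that heat pumps exploit.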

As compute scales, active thermal rejection at higher temperatures pushes heat rejection into an entirely different regime, reaching several kilowatts per meter squared. This makes waste heat rejection a tractable engineering problem, optimizing a heat pump’s coefficient of performance (COP) against the spacecraft’s Power Usage Effectiveness (PUE), rather than a fundamental physics limitation.

For near-term orbital compute platforms, thermal management is therefore less of a fundamental blocker than often assumed, especially when compute is distributed across spacecraft surface area rather than concentrated into megawatt-class volumes. At larger scales, mass-optimized radiators and active cooling loops may become necessary.

Star Catcher provides an additional systems-level advantage here: by delivering power directionally, operators can decouple power collection from thermal pointing.

Radiators can remain oriented toward cold deep space while power collection is handled independently, reducing thermal complexity and enabling higher sustained compute operation.

Additionally, the reduced solar panel mass and surface area enabled by Star Catcher allow for increased radiator mass and surface area to be applied within the same mass and volume footprint, opening up opportunities for increased compute density within each orbital data center. Further gains are possible with large scale systems, including heat pumps to raise the temperatures and shrink radiators.

About Star Catcher

Star Catcher is building the first power grid in space — beaming concentrated solar energy on demand to satellites in orbit. By eliminating power as a constraint on spacecraft design and mission capability, Star Catcher is unlocking a new generation of space operations for commercial, civil, and national security customers. Learn more at www.star-catcher.com.

For press inquiries: press@star-catcher.com

For general inquiries: info@star-catcher.com